Top 10 World-class Data Transformation Tools for 2024

- Ndz Anthony

- November 27, 2023

In an era where data is a critical asset for any organization, keeping up with the latest data transformation tools is essential. This article presents an updated list of the top 10 data transformation tools for 2024, each selected for its ability to manage and transform data efficiently.

These tools are vital for organizations looking to optimize their data processes and gain valuable insights in a constantly evolving technological landscape.

Brief List of Data Transformation Tools

Before we delve deeper, here’s a quick list of the tools we’ll be covering:

- Apache NiFi

- Talend

- Datameer

- Informatica PowerCenter

- AWS Glue

- dbt (Data Build Tool)

- Fivetran

- Matillion

- Google Cloud Dataflow

- Alteryx (including Trifacta)

In-Depth Review:

For each tool, we focus on five aspects: its primary data transformation capabilities, the unique feature that distinguishes it, its standout aspect, its ideal user profile, and its biggest limitation. Together, these give a concise picture of what each tool offers and where it fits best in the data transformation landscape.

1. Apache NiFi:

- Data Transformation: Customizable data routing, transformation, and system mediation with a visual approach.

- Unique Feature: Flexible dataflow design with a user-friendly drag-and-drop interface.

- Standout Aspect: Exceptional at handling data provenance and real-time data tracking.

- User Profile: Best suited for data engineers and system integrators dealing with complex, high-volume data streams.

- Biggest Con: Setup and administration can be complex and require significant expertise in dataflow management.
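NiFi's provenance tracking records what happened to each piece of data (a FlowFile, in NiFi's terms) as it moves through a flow. The sketch below illustrates the general idea in plain Python and is not NiFi's API: each processing step returns a new record whose lineage log has one more event, so you can later trace where a value came from. The `Record` class, the `apply_step` helper and the step names are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class Record:
    """Hypothetical stand-in for one unit of data moving through a flow."""
    content: dict
    provenance: list = field(default_factory=list)


def apply_step(record: Record, name: str, fn: Callable[[dict], dict]) -> Record:
    """Apply one transformation and return a new Record with a provenance event appended."""
    before = dict(record.content)
    after = fn(dict(before))  # pass a copy so `before` stays untouched
    changed = sorted(k for k in before.keys() | after.keys() if before.get(k) != after.get(k))
    event = {
        "step": name,
        "time": datetime.now(timezone.utc).isoformat(),
        "changed_keys": changed,
    }
    # Build a new list so the input record's history is never mutated.
    return Record(content=after, provenance=record.provenance + [event])


raw = Record({"name": " Ada ", "country": "uk"})
cleaned = apply_step(raw, "trim_name", lambda d: {**d, "name": d["name"].strip()})
cleaned = apply_step(cleaned, "upper_country", lambda d: {**d, "country": d["country"].upper()})

for event in cleaned.provenance:
    print(event["step"], event["changed_keys"])
# trim_name ['name']
# upper_country ['country']
```

Keeping each record's history immutable mirrors why provenance is useful: the original `raw` record still shows an empty history, while `cleaned` carries the full chain of steps that produced it.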

2. Talend:

- Data Transformation: Provides a wide range of tools for data integration, transformation, and quality management.

- Unique Feature: Combines data integration and data quality processes.

- Standout Aspect: Open-source core with a broad community, offering extensive connectivity.

- User Profile: Data engineers and business analysts looking for a comprehensive, flexible solution.

- Biggest Con: The learning curve can be steep, and full functionality often requires the paid version.

3. Datameer:

- Data Transformation: Offers versatile data transformation with both no-code and SQL capabilities, ideal for diverse skill levels.

- Unique Feature: Real-time data previews and a user-friendly interface that supports team collaboration.

- Standout Aspect: Native Snowflake integration ensures efficient data governance and minimizes data duplication.

- User Profile: Best for data engineers, administrators, and analysts seeking a flexible, powerful, and user-friendly tool.

- Biggest Con: Its specialization in Snowflake may limit flexibility for teams working with other data warehouses or across multiple clouds.

- Future Developments (2024): Plans for enhancements in data quality, job management, cost control, and auto documentation.

4. Informatica PowerCenter:

- Data Transformation: Known for robust ETL capabilities and for handling large-scale, complex data integration.

- Unique Feature: High-performance engine and metadata-driven approach.

- Standout Aspect: Strong enterprise focus with robust data governance and management capabilities.

- User Profile: Large enterprises and data professionals needing a scalable solution for complex environments.

- Biggest Con: Can be expensive and may require specialized training to use effectively.

5. AWS Glue:

- Data Transformation: Fully managed ETL service that automates data preparation for analytics.

- Unique Feature: Serverless data integration service that automatically discovers and prepares data.

- Standout Aspect: Deep integration with AWS services, ideal for AWS-centric environments.

- User Profile: Data engineers and analysts in AWS environments focusing on cloud-based data transformation.

- Biggest Con: Limited to the AWS ecosystem, which can be a constraint for organizations outside AWS.

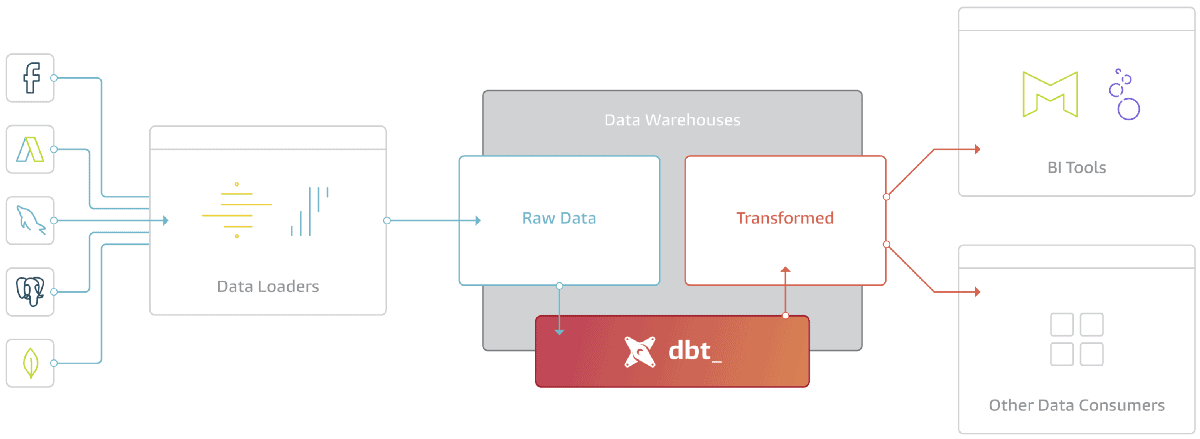

6. dbt (Data Build Tool):

- Data Transformation: Specializes in transforming data in-warehouse using SQL.

- Unique Feature: Transformations are written as SQL SELECT statements, so analytics engineers can work in a language they already know.

- Standout Aspect: Emphasizes version control and testing, bringing software development best practices to data transformation.

- User Profile: Data analysts and engineers comfortable with SQL and looking for in-warehouse transformations.

- Biggest Con: Its SQL focus may limit its usefulness for users who are not proficient in SQL.

7. Fivetran:

- Data Transformation: Automates data integration from various sources into a data warehouse.

- Unique Feature: Fully automated, zero-maintenance pipeline with minimal setup.

- Standout Aspect: Focus on replicating all data into a single warehouse, ideal for straightforward data consolidation.

- User Profile: Businesses and data teams needing automated, hassle-free data integration.

- Biggest Con: Limited transformation capabilities compared to more comprehensive ETL tools.

8. Matillion:

- Data Transformation: Cloud-native ETL tool for high-speed data transformation in cloud environments.

- Unique Feature: Designed specifically for cloud data warehouses, with transformations executed inside the warehouse itself.

- Standout Aspect: Rich set of pre-built components and connectors for rapid development.

- User Profile: Data engineers in cloud-centric organizations, especially those using Redshift, BigQuery, and Snowflake.

- Biggest Con: Primarily focused on cloud environments, which might not be ideal for on-premises scenarios.

9. Google Cloud Dataflow:

- Data Transformation: Runs Apache Beam pipelines for both stream and batch data processing in the Google Cloud ecosystem.

- Unique Feature: Optimized for complex event processing with seamless integration with Google Cloud services.

- Standout Aspect: Auto-scaling and performance optimization for large data volumes.

- User Profile: Data engineers and developers in Google Cloud environments.

- Biggest Con: Tightly coupled with Google Cloud, limiting its utility outside this ecosystem.
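Dataflow executes pipelines written with the Apache Beam SDKs, where aggregations over a stream are typically computed per time window. The sketch below shows the idea behind fixed (tumbling) windows in plain Python; it is not the Beam API, and the click events and 60-second window size are assumptions for illustration.

```python
from collections import defaultdict


def fixed_window_counts(events, window_seconds):
    """Count events per key in fixed, non-overlapping windows based on event time.

    `events` is an iterable of (event_time_seconds, key) pairs, in any order.
    Returns {(window_start, key): count}, sorted by window start, then key.
    """
    counts = defaultdict(int)
    for timestamp, key in events:
        # Each event belongs to exactly one window: [start, start + window_seconds).
        window_start = timestamp - (timestamp % window_seconds)
        counts[(window_start, key)] += 1
    return dict(sorted(counts.items()))


# Hypothetical click events: (event_time_in_seconds, page)
events = [(5, "home"), (42, "home"), (61, "cart"), (75, "home"), (119, "cart"), (120, "home")]
print(fixed_window_counts(events, 60))
# {(0, 'home'): 2, (60, 'cart'): 2, (60, 'home'): 1, (120, 'home'): 1}
```

A real streaming engine must also decide when a window is complete even though events can arrive late (Beam uses watermarks and triggers for this); the sketch sidesteps that question by processing a finite list.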

10. Alteryx (including Trifacta):

- Data Transformation: Intuitive workflows for data blending, preparation, and analytics.

- Unique Feature: Accessible interface for both technical and non-technical users.

- Standout Aspect: Strong in self-service analytics, enabling complex data analysis without deep technical knowledge.

- User Profile: Data analysts, business analysts, and non-technical users.

- Biggest Con: Can be expensive, and advanced features come with a steep learning curve.

Choosing the Best Tool for Your Organization:

Before making a final decision, consider these factors when selecting a data transformation tool for your organization:

- How much data you have and how it is stored.

- Your current data infrastructure.

- Who uses your data: mostly engineers and data scientists, or also citizen data integrators, citizen data scientists, and business users?

- Your budget.

- Your use cases.

- Ease of installation and use.

- Security and compliance with local and regional regulatory requirements.

- Reviews and testimonials on established review sites such as Gartner, G2, and Capterra.
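One simple way to turn the criteria above into a decision is a weighted scoring matrix: give each criterion a weight that reflects your priorities, score each shortlisted tool against it, and compare the weighted totals. The sketch below uses hypothetical weights, placeholder tool names and made-up scores from 1 to 5; they are not ratings of the tools reviewed here.

```python
# Hypothetical weights: how much each criterion matters to you (should sum to 1.0).
WEIGHTS = {
    "data_volume": 0.20,
    "infrastructure_fit": 0.25,
    "user_fit": 0.15,
    "budget_fit": 0.15,  # higher score = more affordable for you
    "ease_of_use": 0.10,
    "security_compliance": 0.15,
}

# Hypothetical 1-5 scores from your own evaluation or proof of concept.
SCORES = {
    "Tool A": {"data_volume": 5, "infrastructure_fit": 3, "user_fit": 2,
               "budget_fit": 3, "ease_of_use": 2, "security_compliance": 4},
    "Tool B": {"data_volume": 3, "infrastructure_fit": 5, "user_fit": 4,
               "budget_fit": 4, "ease_of_use": 4, "security_compliance": 4},
}


def weighted_total(scores: dict, weights: dict) -> float:
    """Sum of score * weight over all criteria; a missing score raises KeyError."""
    return round(sum(scores[criterion] * w for criterion, w in weights.items()), 2)


assert abs(sum(WEIGHTS.values()) - 1.0) < 1e-9, "weights should sum to 1"

ranking = sorted(SCORES, key=lambda tool: weighted_total(SCORES[tool], WEIGHTS), reverse=True)
for tool in ranking:
    print(tool, weighted_total(SCORES[tool], WEIGHTS))
# Tool B 4.05
# Tool A 3.3
```

The weights make trade-offs explicit: in this made-up example, Tool A wins on data volume but loses overall because infrastructure fit carries the most weight.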

Rethink Data Transformation with Datameer.

Datameer is an essential tool for any company that uses Snowflake for data processing, storage, and analytics. Financial services, telecommunications, healthcare, retail, travel & hospitality, and energy companies are all adopting Datameer to transform data and improve analysis and collaboration.

Book a quick call with us, and we’ll get you set up ASAP!